Tech gauges how brain learns faces

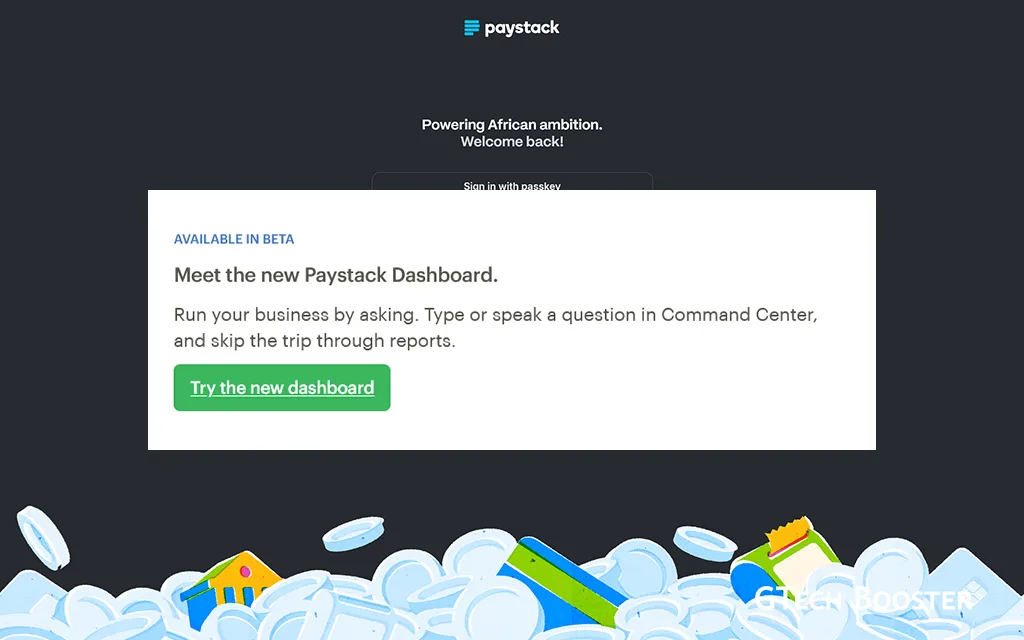

Real-world, unconstrained images like these (a) are used to train facial recognition networks. Testing for the study was done on highly controlled laser-scan data varying by viewpoint (b, columns), illumination (b, rows) and caricature-like identity strength (c). Credit: University of Texas at Dallas.

Facial recognition technology has advanced swiftly in the last five years. As University of Texas at Dallas researchers try to determine how computers have gotten as good as people at the task, they are also shedding light on how the human brain sorts information.

UT Dallas scientists have analyzed the performance of the latest echelon of facial recognition algorithms, revealing the surprising way these programs—which are based on machine learning—work. Their study, published online Nov. 12 in Nature Machine Intelligence, shows that these sophisticated computer programs—called deep convolutional neural networks (DCNNs)—figured out how to identify faces differently than the researchers expected.

“For the last 30 years, people have presumed that computer-based visual systems get rid of all the image-specific information—angle, lighting, expression and so on,” said Dr. Alice O’Toole, senior author of the study and the Aage and Margareta Møller Professor in the School of Behavioral and Brain Sciences. “Instead, the algorithms keep that information while making the identity more important, which is a fundamentally new way of thinking about the problem.”

In machine learning, computers analyze large amounts of data in order to learn to recognize patterns, with the goal of being able to make decisions with minimal human input. O’Toole said the progress made by machine learning for facial recognition since 2014 has “changed everything by quantum leaps.”

“Things that were never doable before, that have impeded computer vision technology for 30 years, became not only doable, but pretty easy,” O’Toole said. “The catch is that nobody understood how it works.”

Previous-generation algorithms were effective in recognizing faces that had only minor changes from the image they already knew. Current technology, however, knows an identity well enough to overcome changes in expression, viewpoint or appearance, such as removing glasses.

“These new algorithms operate more like you and me,” O’Toole said. “That’s in part because they have accumulated a massive amount of experience with variations in how one identity can appear. But that’s not the whole picture.”

O’Toole’s team set about learning how the learning algorithms operate—both to substantiate the trust put into their results and, as lead author Matthew Hill explained, to shed light on how the visual cortex of the human brain performs the same task.

“The structure of this type of neural network was originally inspired by how the brain processes visual information,” said Hill, a cognition and neuroscience doctoral student. “Because it excels at solving the same problems that the brain does, it can give insight into how the brain solves the problem.”

The origins of the type of neural network algorithm that the team studied date back to 1980, but the power of neural networks grew exponentially more than 30 years later.

“Early this decade, two things happened: The internet gave this program millions of images and identities to work with—unbelievable amounts of easily available data—and computing power grew, so that, instead of having two or three layers of ‘neurons’ in the neural network, you can have more than 100 layers, as this system now does,” O’Toole said.

Despite the algorithm’s intended purpose, the scale of its calculations—which number at least in the tens of millions—means scientists are unable to understand everything that it does.

“Even though the algorithm was designed to model neuron behavior in the brain, we can’t keep track of everything done between input and output,” said Connor Parde, an author of the paper and a cognition and neuroscience doctoral student. “So we have to focus our research on the output.”

To demonstrate the algorithm’s capabilities, the team used caricatures, extreme versions of an identity, which Y. Ivette Colón BS’17, a research assistant and another author of the study, described as “the most ‘you’ version of you.”

“Caricatures exaggerate your unique identity relative to everyone else’s,” O’Toole said. “In a way, that’s exactly what the algorithm wants to do: highlight what makes you different from everyone else.”

To the surprise of the researchers, the DCNN actually excelled at connecting caricatures to their corresponding identities.

“Given these distorted images with features out of proportion, the network understands that these are the same features that make an identity distinctive and correctly connects the caricature to the identity,” O’Toole said. “It sees that distinctive identity in ways that none of us anticipated.”
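The idea of a caricature as an exaggerated identity can be illustrated with a small sketch. It is not the study's method or the DCNN itself. It assumes, purely for illustration, that each face is a short list of numbers, that a caricature is made by pushing a face further from the average face, and that identities are matched by the direction in which they differ from that average. The names `caricature`, `closest_identity` and the three-number "faces" are hypothetical.

```python
import math

def caricature(face, average, strength):
    """Move a face vector away from the average face.

    strength = 1.0 leaves the face unchanged; larger values exaggerate it.
    """
    return [a + strength * (f - a) for f, a in zip(face, average)]

def cosine(u, v):
    """Cosine of the angle between two vectors (1.0 means same direction)."""
    dot = sum(x * y for x, y in zip(u, v))
    norm = math.sqrt(sum(x * x for x in u)) * math.sqrt(sum(y * y for y in v))
    return dot / norm

def closest_identity(probe, gallery, average):
    """Return the gallery name whose difference from the average
    points in the direction most similar to the probe's difference."""
    probe_dev = [p - a for p, a in zip(probe, average)]

    def score(name):
        dev = [g - a for g, a in zip(gallery[name], average)]
        return cosine(probe_dev, dev)

    return max(gallery, key=score)

# Made-up three-number "faces" (an assumption for this sketch);
# real systems work with far larger, learned descriptors.
gallery = {
    "alice": [0.9, 0.2, 0.4],
    "bob": [0.3, 0.8, 0.5],
    "carol": [0.5, 0.4, 0.9],
}
average = [sum(column) / len(gallery) for column in zip(*gallery.values())]

# Exaggerate what makes "bob" different from the average face.
exaggerated_bob = caricature(gallery["bob"], average, strength=2.0)
print(closest_identity(exaggerated_bob, gallery, average))
```

In this toy setup the exaggerated face is still matched to "bob," because exaggeration changes how far the face sits from the average, not the direction in which it differs. Whether the network studied here organizes identities this way is exactly what the researchers are investigating.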

So, as computer systems begin to equal—and, on occasion, surpass—the facial recognition performance of humans, could the algorithm’s basis for sorting information resemble what the human brain does?

Finding out requires a better understanding of the human visual cortex. The most detailed information available comes from images obtained through functional MRI (fMRI), which can be used to image the activity of the brain while a subject is performing a mental task. But Hill described fMRI as “too noisy” to see the small details.

“The resolution of an fMRI is nowhere near what you need to see what’s happening with the activity of individual neurons,” Hill said. “With these networks, you have every computation. That allows us to ask: Could identities be organized this way in our minds?”

O’Toole’s lab will tackle that question next, thanks to a recent grant of more than $1.5 million across four years from the National Eye Institute of the National Institutes of Health.

“The NIH has tasked us with the biological question: How relevant are these results for human visual perception?” she said. “We have four years of funding to find an answer.”